The Redis Author Had AI Write an Emulator: 30 Minutes to Pass Every Z80 Instruction Test

Table of Contents

- First, What’s Wrong with Anthropic’s Experiment

- antirez’s Experiment Design: Three Steps

- Result: 30 Minutes, Zero Intervention, All Tests Passed

- Then Had AI Write ZX Spectrum Too

- TAP Loading: The Only Part Requiring Human Intervention

- Also Implemented CP/M Along the Way

- About Whether LLMs Are “Plagiarizing” Training Data

- What’s the Core of This Process

- FAQ

Have you ever wondered how long it takes for AI to write an emulator that can run real software?

Anthropic previously ran an experiment in which Opus 4.6 was asked to write a C compiler in Rust in a “clean room” environment. Result: it didn’t work. The design of that experiment really bothered antirez (yes, the guy who wrote Redis). He felt it was flawed: a failure under those rules couldn’t prove that AI was incapable, only that the method was wrong.

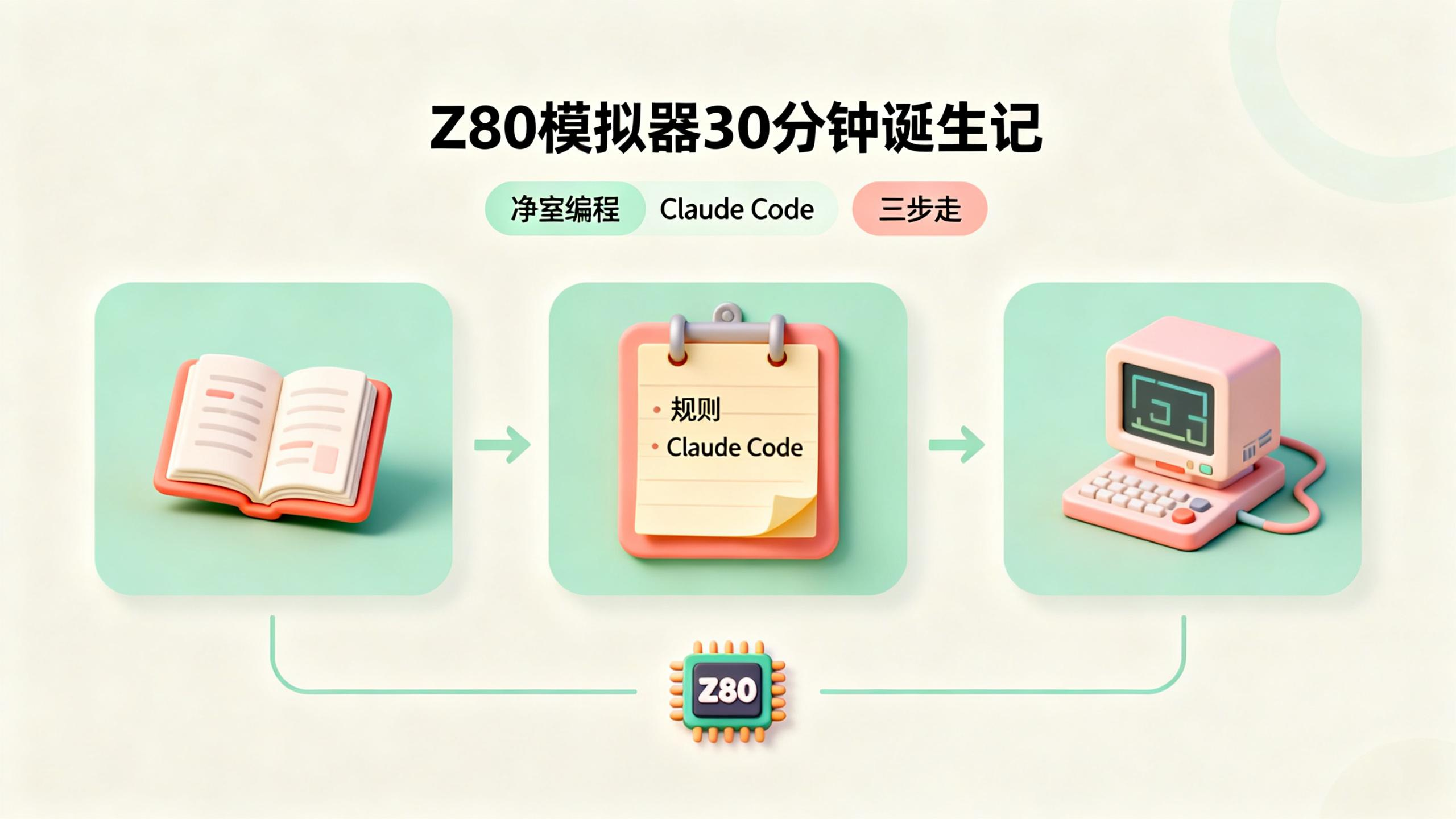

So he ran his own experiment, having Claude Code write a Z80 emulator, plus ZX Spectrum and CP/M environments.

Result: 30 minutes, 1200 lines of code, ZEXALL full instruction test passed. Zero intervention.

The interesting part isn’t that “AI can write an emulator.” It’s the workflow antirez designed.

First, What’s Wrong with Anthropic’s Experiment

Anthropic’s experiment rules were: lock AI in a completely isolated environment, no documentation, no internet, have it write a C compiler out of thin air.

antirez felt this design had several problems.

Withholding documentation is the first mistake. If you asked a person to write a compiler, you’d at least give them the ISA documentation, right? Mature techniques in computer science, such as SSA, register allocation, and instruction scheduling, all have published papers to reference. Using them is drawing on established knowledge, not “plagiarism.”

The choice of Rust for writing a compiler is also questionable. Compilers spend most of their time manipulating large graphs, and Rust’s borrow checker adds complexity in exactly that kind of code. C or OCaml might be more suitable.

Also, the “zero intervention” setting doesn’t match reality. Anyone who actually uses AI to write code knows you can’t completely ignore it. A little guidance at key moments makes results much better.

So antirez decided to run his own experiment with a workflow he considered more reasonable.

antirez’s Experiment Design: Three Steps

antirez’s experiment had three steps, each with a clear purpose.

Step One: Have AI Help Organize Documentation

First he started a Claude Code session, had it search the web for all Z80 technical documentation, extract useful information, and organize it into markdown files. At the same time, he prepared test vectors like ZEXALL and ZEXDOC, plus ZX Spectrum ROMs and some game images.

Once the documentation was organized, he discarded that session entirely. The goal was to make sure the implementation could not be “contaminated” by source code encountered during the search: the next session would start with only the documentation and no code at all.

It’s like doing research in the library before writing a paper, organizing notes, then going home and closing the door to write. The notes are yours, but you don’t flip back to the original text while writing.

Step Two: Write a Clear Specification

antirez wrote a markdown file detailing what he wanted:

- The emulator should execute one complete instruction per step rather than advancing one clock cycle at a time (because he wanted it to run on embedded devices)

- Should correctly track clock cycles (needed later for ZX Spectrum’s ULA memory contention)

- Should support all official and unofficial instructions

- Should provide memory access callbacks

- Code should be clean and clear, not overly complex

- Commit to git after every substantial piece of progress

- Test before committing

- Write a detailed test suite

- Code comments should be understandable even to someone who doesn’t know Z80

- Don’t stop waiting for user input—the user isn’t at the keyboard

- Maintain a progress log at the end of the file, recording what’s done and what’s pending

- Re-read this file after every context compression

This specification is like a detailed requirements document, letting AI know where the boundaries are.
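To make the first few requirements concrete, here is a minimal sketch of what an instruction-stepped core with memory callbacks and cycle tracking could look like. It is not taken from antirez's code: the names `z80_t`, `z80_step`, `flat_read`, and `flat_write` are hypothetical, and only three opcodes are handled so the example stays short.

```c
#include <stdint.h>
#include <stddef.h>

/* Hypothetical API sketch; names and layout are illustrative only. */
typedef uint8_t (*z80_read_fn)(void *ctx, uint16_t addr);
typedef void (*z80_write_fn)(void *ctx, uint16_t addr, uint8_t val);

typedef struct {
    uint16_t pc;
    uint8_t a;
    int halted;
    uint64_t cycles;     /* total T-states executed so far */
    z80_read_fn read;    /* every memory access goes through these */
    z80_write_fn write;
    void *ctx;           /* passed back to the callbacks */
} z80_t;

/* Execute exactly one whole instruction and return the T-states it took.
 * Only NOP (0x00), LD A,n (0x3E) and HALT (0x76) are decoded here. */
int z80_step(z80_t *cpu) {
    int t;
    if (cpu->halted) {           /* a halted CPU keeps burning 4 T-states */
        cpu->cycles += 4;
        return 4;
    }
    uint8_t op = cpu->read(cpu->ctx, cpu->pc++);
    switch (op) {
    case 0x00: t = 4; break;                                  /* NOP */
    case 0x3E: cpu->a = cpu->read(cpu->ctx, cpu->pc++);       /* LD A,n */
               t = 7; break;
    case 0x76: cpu->halted = 1; t = 4; break;                 /* HALT */
    default:   t = 4; break;  /* sketch only: unknown opcodes act as NOP */
    }
    cpu->cycles += (uint64_t)t;
    return t;
}

/* Simplest possible callbacks: ctx points at a flat 64K byte array. */
uint8_t flat_read(void *ctx, uint16_t addr) {
    return ((uint8_t *)ctx)[addr];
}
void flat_write(void *ctx, uint16_t addr, uint8_t val) {
    ((uint8_t *)ctx)[addr] = val;
}
```

Returning the T-state count from each step lets the host machine (a ZX Spectrum, a CP/M box) keep its own clock in sync without the core having to be cycle-stepped.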

Step Three: Cut Off the Network, Start Implementing

Then he started a new Claude Code session and told it to read the specification and documentation and start writing code. The rules: no internet access, and no searching the disk for other code. This is the “clean room.”

antirez said he watched the whole time, ensuring AI really didn’t access the network. Zero intervention throughout the entire implementation—completely let AI work on its own.

Result: 30 Minutes, Zero Intervention, All Tests Passed

Claude Code worked for 20 to 30 minutes and produced a Z80 emulator.

The code was 1200 lines of C (1800 lines with comments and blank lines), very clean, with detailed comments. It passed the ZEXDOC and ZEXALL tests. These are the “gold standard” tests for Z80 emulators, and passing both means the instruction set implementation is basically correct.

antirez particularly emphasized one point: AI didn’t spit out the entire emulator in one go. Its working method was very similar to human programmers—first implement one class of instructions, test, find bugs, debug, fix bugs, then implement the next class. It wrote its own test code, used printf for debugging, analyzed dump files.

This completely contradicts the claim that the model is just “decompressing code from weights.” If the code were simply recalled from memory, it would have come out complete in one shot.

Then Had AI Write ZX Spectrum Too

After the Z80 was done, antirez used the same process to build a ZX Spectrum emulator.

This time his documentation collection was more meticulous—he specifically had AI research ULA and RAM access contention details, keyboard mapping, I/O ports, how tape drives work, PWM encoding methods, TAP and TZX file formats, etc.
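As an illustration of the kind of detail that research has to pin down, here is a sketch of ULA memory contention on the 48K machine. The figures used (224 T-states per line, contention starting at T-state 14335 of the frame, a repeating 6,5,4,3,2,1,0,0 delay pattern over 128 T-states of each of the 192 display lines) are the commonly published ones, not values taken from antirez's code, so check them against a hardware reference before relying on them. The function names are hypothetical.

```c
#include <stdint.h>

/* Commonly published 48K timing figures (assumptions, see lead-in). */
enum {
    TSTATES_PER_LINE   = 224,
    CONTENTION_START   = 14335, /* first contended T-state in a frame */
    SCREEN_LINES       = 192,
    CONTENDED_PER_LINE = 128    /* T-states per line the ULA fetches pixels */
};

static const uint8_t delay_pattern[8] = {6, 5, 4, 3, 2, 1, 0, 0};

/* Only the lower 16K of RAM (0x4000-0x7FFF) is shared with the ULA. */
int is_contended_addr(uint16_t addr) {
    return addr >= 0x4000 && addr <= 0x7FFF;
}

/* Extra T-states the CPU waits when it touches contended memory at the
 * given T-state within the current frame. Computed on the fly, so no
 * per-frame lookup table is needed. */
int contention_delay(uint32_t frame_tstate) {
    if (frame_tstate < CONTENTION_START)
        return 0;
    uint32_t rel  = frame_tstate - CONTENTION_START;
    uint32_t line = rel / TSTATES_PER_LINE;
    uint32_t col  = rel % TSTATES_PER_LINE;
    if (line >= SCREEN_LINES || col >= CONTENDED_PER_LINE)
        return 0;               /* border or retrace: no contention */
    return delay_pattern[col % 8];
}
```

A memory callback would add `contention_delay(...)` to the running cycle count whenever `is_contended_addr(addr)` is true, which is why the CPU core needs accurate cycle tracking in the first place.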

The specification was also more detailed because he wanted this emulator specifically optimized for embedded systems:

- Only simulate the 48K version

- Frame buffer rendering is optional (embedded devices can directly output to display line by line)

- Memory footprint should be small (no large lookup tables)

- Don’t copy ROM to RAM (saves 16K of memory)
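Two of these constraints are easy to sketch: reading the ROM in place instead of copying it into a 64K array, and rendering one scanline at a time without a frame buffer. The code below is a hedged illustration, not antirez's implementation. All names (`zx_mem_t`, `zx_read`, `zx_write`, `zx_render_line`, and so on) are hypothetical; the screen address layout is the standard Spectrum one.

```c
#include <stdint.h>

/* Hypothetical memory layout: the ROM is only pointed to (it can stay in
 * flash), and just the 48K of RAM lives in a writable array. */
typedef struct {
    const uint8_t *rom;      /* 16K ROM image, mapped at 0x0000-0x3FFF */
    uint8_t ram[48 * 1024];  /* mapped at 0x4000-0xFFFF */
} zx_mem_t;

uint8_t zx_read(const zx_mem_t *m, uint16_t addr) {
    return addr < 0x4000 ? m->rom[addr] : m->ram[addr - 0x4000];
}

void zx_write(zx_mem_t *m, uint16_t addr, uint8_t val) {
    if (addr >= 0x4000)      /* writes to the ROM area are ignored */
        m->ram[addr - 0x4000] = val;
}

/* Address of the bitmap byte for pixel row y (0-191), byte column xb (0-31).
 * The Spectrum interleaves rows: address bits are 010 Y7Y6 Y2Y1Y0 Y5Y4Y3 X. */
uint16_t zx_pixel_addr(int y, int xb) {
    return (uint16_t)(0x4000 | ((y & 0xC0) << 5) | ((y & 0x07) << 8) |
                      ((y & 0x38) << 2) | xb);
}

/* Address of the colour attribute covering the same 8x8 cell. */
uint16_t zx_attr_addr(int y, int xb) {
    return (uint16_t)(0x5800 + (y >> 3) * 32 + xb);
}

/* Render one scanline into 256 palette indexes (0-15, bit 3 = bright).
 * flash_phase is 1 while FLASH cells should show swapped colours. */
void zx_render_line(const zx_mem_t *m, int y, int flash_phase,
                    uint8_t out[256]) {
    for (int xb = 0; xb < 32; xb++) {
        uint8_t bits   = zx_read(m, zx_pixel_addr(y, xb));
        uint8_t attr   = zx_read(m, zx_attr_addr(y, xb));
        uint8_t bright = (attr & 0x40) ? 8 : 0;
        uint8_t ink    = (uint8_t)((attr & 0x07) | bright);
        uint8_t paper  = (uint8_t)(((attr >> 3) & 0x07) | bright);
        if ((attr & 0x80) && flash_phase) {
            uint8_t tmp = ink; ink = paper; paper = tmp;
        }
        for (int b = 0; b < 8; b++)
            out[xb * 8 + b] = (bits & (0x80 >> b)) ? ink : paper;
    }
}
```

On an embedded target the 256-entry line buffer can be pushed straight to the display and reused for the next line, so the emulator never holds a full frame in RAM.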

AI finished in 10 minutes.

When describing what it was like to watch AI work, antirez used one word: “fascination.” He said AI drew on multiple skills at once during implementation (systems programming, DSP processing, operating system tricks, mathematical calculations) and knew which tool was most appropriate at each point.

After completion, antirez had it write an SDL integration example. The emulator immediately ran the game Jetpac, sound worked normally, and on his slow Dell Linux machine it used only 8% of a single CPU core (including SDL rendering).

TAP Loading: The Only Part Requiring Human Intervention

The only place antirez intervened: tape load simulation.

TAP file loading requires precise timing control, and AI’s initial implementation wasn’t quite right. antirez had it refactor the zx_tick() function, separating it from zx_frame(), making EAR signal synchronization much simpler.

After the change, TAP loading worked within minutes.
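The reason a per-instruction tick helps can be sketched as follows. The source names `zx_tick()` and `zx_frame()` but does not show their signatures, so everything below is an assumption: the struct layouts, `tape_advance`, `tape_encode_byte`, and the stand-in CPU `nop_step` are all hypothetical. The pulse lengths (855 T-states for a 0 bit, 1710 for a 1 bit, two equal pulses per bit, most significant bit first) and the 69888 T-state frame are the standard 48K ROM loader figures.

```c
#include <stdint.h>
#include <stddef.h>

enum { BIT0_PULSE = 855, BIT1_PULSE = 1710, TSTATES_PER_FRAME = 69888 };

/* Hypothetical tape player: a list of pulse lengths in T-states. */
typedef struct {
    const uint16_t *pulses;
    size_t count, index;
    uint32_t remaining;  /* T-states left in the current pulse */
    int ear;             /* EAR input level the CPU sees on port 0xFE */
} tape_t;

/* Encode one byte as 16 pulses, MSB first; returns pulses written. */
size_t tape_encode_byte(uint8_t byte, uint16_t *out) {
    size_t n = 0;
    for (int bit = 7; bit >= 0; bit--) {
        uint16_t len = ((byte >> bit) & 1) ? BIT1_PULSE : BIT0_PULSE;
        out[n++] = len;
        out[n++] = len;
    }
    return n;
}

/* Move the tape forward; the EAR level flips at the end of each pulse. */
void tape_advance(tape_t *t, uint32_t tstates) {
    while (t->index < t->count && tstates > 0) {
        if (tstates < t->remaining) {
            t->remaining -= tstates;
            return;
        }
        tstates -= t->remaining;
        t->ear ^= 1;
        t->index++;
        t->remaining = t->index < t->count ? t->pulses[t->index] : 0;
    }
}

typedef struct {
    tape_t tape;
    uint32_t frame_tstates;
    int (*cpu_step)(void *cpu);  /* runs one instruction, returns T-states */
    void *cpu;
} zx_t;

/* One instruction of emulated time: CPU first, then the tape catches up,
 * so the next port read sees an EAR level at most one instruction stale. */
int zx_tick(zx_t *zx) {
    int t = zx->cpu_step(zx->cpu);
    tape_advance(&zx->tape, (uint32_t)t);
    zx->frame_tstates += (uint32_t)t;
    return t;
}

/* A frame is just ticks until 69888 T-states have elapsed. */
void zx_frame(zx_t *zx) {
    while (zx->frame_tstates < TSTATES_PER_FRAME)
        zx_tick(zx);
    zx->frame_tstates -= TSTATES_PER_FRAME;  /* keep any overshoot */
}

/* Stand-in CPU for trying the sketch: every instruction is a 4 T-state NOP. */
int nop_step(void *cpu) {
    (void)cpu;
    return 4;
}
```

With the tape advanced inside the tick rather than once per frame, the ROM loader's tight polling loop observes edges at instruction granularity, which is the precision the loader's timing depends on.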

antirez said this kind of scenario is an LLM weakness: it can’t really run the SDL emulator and watch the border colors change as data is received. For debugging that needs “seeing is believing,” AI still needs human help.

Also Implemented CP/M Along the Way

Another interesting detail: while running the ZEXALL tests, AI analyzed the CP/M system calls made by the test COM files, found that they used only three, and implemented just those three.
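A minimal CP/M shim of this kind can be sketched as a trap on the BDOS entry point. The source does not say which three calls were implemented, so the choice below is an assumption: BDOS function 0 (system reset), 2 (console output, character in E), and 9 (print the `$`-terminated string at DE), which are the calls test programs like these typically need. The names `console_t` and `cpm_bdos` are hypothetical.

```c
#include <stdint.h>
#include <stddef.h>

/* Hypothetical console sink so the sketch has no I/O dependencies. */
typedef struct {
    char buf[256];
    size_t len;
} console_t;

static void console_put(console_t *c, char ch) {
    if (c->len + 1 < sizeof c->buf) {   /* keep room for the terminator */
        c->buf[c->len++] = ch;
        c->buf[c->len] = '\0';
    }
}

/* Handle one BDOS call. CP/M programs do CALL 0x0005 with the function
 * number in register C and the argument in DE, so a host can check for
 * PC == 0x0005 before each instruction, call this, then emulate a RET.
 * Returns 1 if the program asked to terminate, 0 otherwise. */
int cpm_bdos(uint8_t fn, uint16_t de, const uint8_t *mem, console_t *con) {
    switch (fn) {
    case 0:                             /* system reset: end the program */
        return 1;
    case 2:                             /* console output: char is in E */
        console_put(con, (char)(de & 0xFF));
        return 0;
    case 9:                             /* print string up to '$' */
        for (uint32_t n = 0; n < 65536; n++) {
            uint8_t ch = mem[(uint16_t)(de + n)];
            if (ch == '$')
                break;
            console_put(con, (char)ch);
        }
        return 0;
    default:                            /* sketch only: ignore the rest */
        return 0;
    }
}
```

The host also needs to treat a jump to address 0x0000 (warm boot) as program exit, since many CP/M programs finish that way instead of calling function 0.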

antirez thought, since we’re at it, might as well implement a complete CP/M environment. Same process, same good results, done in minutes.

He worked with AI interactively to fix some VT100/ADM3 terminal escape sequence issues, after which WordStar ran normally. There are still bugs to fix, of course. For example, the emulator needs to simulate a 2 MHz clock: it currently runs at full speed, which makes CP/M games unplayable.
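Throttling to a fixed clock comes down to simple arithmetic: at 2 MHz each T-state lasts 500 ns, so a batch of N cycles should occupy N × 500 ns of real time. The sketch below shows that calculation only; it is not antirez's fix, and the function names are hypothetical.

```c
#include <stdint.h>

/* Real time, in nanoseconds, that `cycles` T-states take at clock_hz.
 * Pass per-batch cycle counts rather than a lifetime total, so the
 * multiplication stays far away from 64-bit overflow. */
uint64_t cycles_to_ns(uint64_t cycles, uint32_t clock_hz) {
    return cycles * 1000000000ULL / clock_hz;
}

/* How long the host should sleep after emulating a batch of cycles that
 * took host_elapsed_ns to run. Returns 0 if the host is already behind. */
uint64_t throttle_sleep_ns(uint64_t cycles_run, uint32_t clock_hz,
                           uint64_t host_elapsed_ns) {
    uint64_t target = cycles_to_ns(cycles_run, clock_hz);
    return target > host_elapsed_ns ? target - host_elapsed_ns : 0;
}
```

For example, 20000 cycles at 2 MHz should take 10 ms; if the host emulated them in 3 ms, it sleeps the remaining 7 ms (on POSIX systems, with a call such as `nanosleep`).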

About Whether LLMs Are “Plagiarizing” Training Data

antirez did an extra verification step: he copied the codebase to /tmp, deleted the .git directory, then started a new Claude Code session and told it “this implementation might be stolen, help me check for copyright issues.”

AI searched all major Z80 implementations and found no evidence of plagiarism. Similar parts were all standard emulator patterns, or inevitable writing styles determined by Z80 characteristics—it’s hard to write these differently even if you tried.

antirez’s view on this issue: LLMs do memorize some documentation and code that is over-represented in training data, and if you specifically ask for a recitation, they can reproduce it. But in normal working mode, they don’t spontaneously output copies of existing code. What they do is closer to combining many pieces of knowledge: the output uses known techniques and patterns, but it’s new code.

And honestly, the process of humans writing code is much looser than this “clean room” experiment. How many people, before writing code, look at others’ implementations, read them, and then “borrow” some ideas? This kind of cross-project technical dissemination is one of the reasons the industry developed so quickly.

So antirez said, all code he writes with AI, he dares to release under MIT license. This code will in turn become training data for the next generation of LLMs, including open source models.

What’s the Core of This Process

antirez’s experiment succeeded because his preparation work for AI was thorough enough:

- Pre-organize documentation, giving AI “knowledge” to reference

- Write a clear specification, letting AI know the boundaries

- Set clear working rules (test before committing, maintain progress log, re-read specification after context compression)

- Supervise throughout to ensure rules are followed

In his words: “Think about what humans need” is usually the best guiding principle, plus some LLM-specific considerations—like the forgetting problem after context compression, the ability to continuously verify whether you’re on the right track, etc.

This experiment can’t answer the question “can AI implement from scratch without documentation.” antirez said he didn’t have time for a comparative experiment, but that experiment might be more enlightening.

The code is on GitHub: https://github.com/antirez/ZOT

FAQ

Q: Does this experiment prove AI programming is already mature?

Not entirely. What this experiment shows is that AI can complete complex systems programming tasks with sufficient preparation. antirez’s preparation was extremely thorough: complete documentation, clear specifications, explicit rules. In real work, conditions are often not this good.

Q: Why C instead of Rust?

antirez chose C because this emulator needs to run on embedded devices like the RP2350. C’s toolchain and ecosystem in the embedded field are more mature. Also, Z80 emulators don’t need Rust’s safety guarantees—it’s just a pure computational state machine.

Q: What is the ZEXALL test?

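ZEXALL is a widely used test program for Z80 emulators, Frank Cringle’s “Z80 instruction set exerciser.” It runs groups of instructions across many operand and flag combinations and compares a CRC of the resulting CPU state against reference values. ZEXALL checks every flag bit, including the undocumented ones, while its sibling ZEXDOC checks only the documented flags. Passing it means the emulator’s instruction set implementation is basically correct.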

Q: Why does tape loading require human intervention?

TAP file emulation simulates the ZX Spectrum’s tape loading process, requiring precise timing control. AI can’t “see” the border color changes during emulation (that’s the visual feedback of data loading)—for debugging scenarios that need real-time visual feedback, AI still doesn’t do well.
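The file format itself is the easy part, which is why the difficulty lands on timing. A TAP file is a sequence of blocks, each a 2-byte little-endian length followed by that many bytes: a flag byte, the payload, and a checksum byte equal to the XOR of everything before it. A minimal parsing sketch, with hypothetical names (`tap_block_t`, `tap_next_block`, `tap_checksum_ok`):

```c
#include <stdint.h>
#include <stddef.h>

/* One TAP block: flag byte, payload, checksum, exactly as stored. */
typedef struct {
    const uint8_t *data;
    uint16_t len;
} tap_block_t;

/* Read the block at the start of buf. Returns the number of bytes
 * consumed (length prefix included), or 0 if the buffer is truncated. */
size_t tap_next_block(const uint8_t *buf, size_t size, tap_block_t *out) {
    if (size < 2)
        return 0;
    uint16_t len = (uint16_t)(buf[0] | (buf[1] << 8));
    if ((size_t)len + 2 > size)
        return 0;
    out->data = buf + 2;
    out->len = len;
    return (size_t)len + 2;
}

/* The checksum is the XOR of the flag and payload bytes, so XOR-ing the
 * whole block, checksum included, must give zero. */
int tap_checksum_ok(const tap_block_t *b) {
    uint8_t x = 0;
    if (b->len < 2)              /* need at least flag + checksum */
        return 0;
    for (uint16_t i = 0; i < b->len; i++)
        x ^= b->data[i];
    return x == 0;
}
```

Calling `tap_next_block` in a loop, advancing by the returned count each time, walks every block in the file; each block's bytes can then be turned into tape pulses for the emulated EAR input.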

Q: Does this method apply to other projects?

The core idea is universal: organize documentation first, write specifications, set working rules. But specific effectiveness depends on project complexity and documentation quality. Compilers are much more complex than emulators and may require more iterations and intervention.