Your AI Agent Can Think, But It Can't Remember

Your AI agent wrote some code for you today. Ask it about that code and it’ll walk you through every detail. Ask again tomorrow and you get a blank stare: what code? It doesn’t remember.

This isn’t a bug. It’s an architecture problem.

Smart Agents, Terrible Memory

If you’ve been building AI agents, you’ve probably noticed this pattern: impressive during the conversation, a complete stranger after it ends. Ask it to store some data, and it asks you “where should I put it?” Two agents working on the same files? At best they overwrite each other. At worst, data corruption.

And that’s before we get to running code. Tell an agent to “run this script” and you’re always half-worried it’ll hit you with an `rm -rf /`.

Agent reasoning improves every day. The operational support around it (storage, memory, execution) has been largely ignored.

Your Tech Stack Probably Looks Like This

If you’re building agent infrastructure, your list probably reads something like this:

- Neon or Supabase for the database

- Mem0 or Zep for memory

- Pinecone or pgvector for vector search

- S3 for files

- E2B for sandboxed execution

Five or six services, held together with glue code.

It works. Kind of. Until the agent’s memory can’t query its own database. Until the sandbox can’t read the agent’s files. Until the glue breaks at 3am.

Ghost’s Approach: Use Databases Like Git Branches

Ghost argues that agents don’t need traditional databases—they need disposable, on-demand workspaces.

Spin up a database the way you create a git branch. Do the work. If it’s good, keep it. If not, discard it. Fork before a risky migration. Experiment on the fork. Merge or discard.

The database stops being part of the underlying infrastructure and becomes part of the workflow itself.
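The article doesn’t say how Ghost forks a database internally. To show the workflow itself (fork, experiment, keep or discard), here is a minimal sketch with SQLite standing in for PostgreSQL. `fork` and `try_migration` are hypothetical helpers, and “keep” simply copies the fork back over the original rather than performing a true merge.

```python
import sqlite3

def fork(main: sqlite3.Connection) -> sqlite3.Connection:
    """HYPOTHETICAL 'branch' operation: copy the whole database into a new in-memory one."""
    branch = sqlite3.connect(":memory:")
    main.backup(branch)
    return branch

def try_migration(main: sqlite3.Connection, migration_sql: str, check) -> bool:
    """Run a risky migration on a fork; keep the result only if `check` passes."""
    branch = fork(main)
    try:
        branch.executescript(migration_sql)
        ok = check(branch)
    except sqlite3.Error:
        ok = False           # a migration that errors out is simply discarded
    if ok:
        branch.backup(main)  # keep: the fork's contents replace the original
    branch.close()           # keep or discard, the fork itself is thrown away
    return ok

def users_intact(conn: sqlite3.Connection) -> bool:
    """Sanity check run against the fork before deciding to keep it."""
    return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0] == 2

main = sqlite3.connect(":memory:")
main.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT);
    INSERT INTO users (email) VALUES ('a@example.com'), ('b@example.com');
""")

print(try_migration(main, "ALTER TABLE users ADD COLUMN verified INTEGER DEFAULT 0;", users_intact))  # True
print(try_migration(main, "DELETE FROM users;", users_intact))  # False: main is untouched
```

The point of the design is that the original is never modified until a fork has passed its checks, so a bad migration costs nothing but a throwaway copy.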

Ghost connects through MCP (the Model Context Protocol). An agent just runs `ghost mcp install` and then discovers Ghost like any other tool: no UI, no configuration wizard. Unlimited databases, 1TB of storage each, free.
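Under the hood MCP is JSON-RPC 2.0, which is what “discovers it like any other tool” means in practice: the client lists a server’s tools, then calls them by name. The sketch below only builds the two request messages (`tools/list` and `tools/call` are MCP methods); the `create_database` tool and its arguments are hypothetical, since the article doesn’t list Ghost’s tools, and transport details are omitted.

```python
import json
from itertools import count
from typing import Optional

_next_id = count(1)  # request ids must not be reused within a session

def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize one JSON-RPC 2.0 request message."""
    msg = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)

# Discovery: ask the server which tools it exposes (names, descriptions, input schemas).
discover = jsonrpc_request("tools/list")

# Invocation: call an advertised tool by name with JSON arguments.
# HYPOTHETICAL tool name and arguments; not taken from Ghost's documentation.
call = jsonrpc_request(
    "tools/call", {"name": "create_database", "arguments": {"name": "scratch"}}
)

print(discover)
print(call)
```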

Every Ghost database is native PostgreSQL, so agents don’t need to relearn anything. Schema design, SQL queries, indexing, debugging: LLMs already carry PostgreSQL knowledge in their weights.

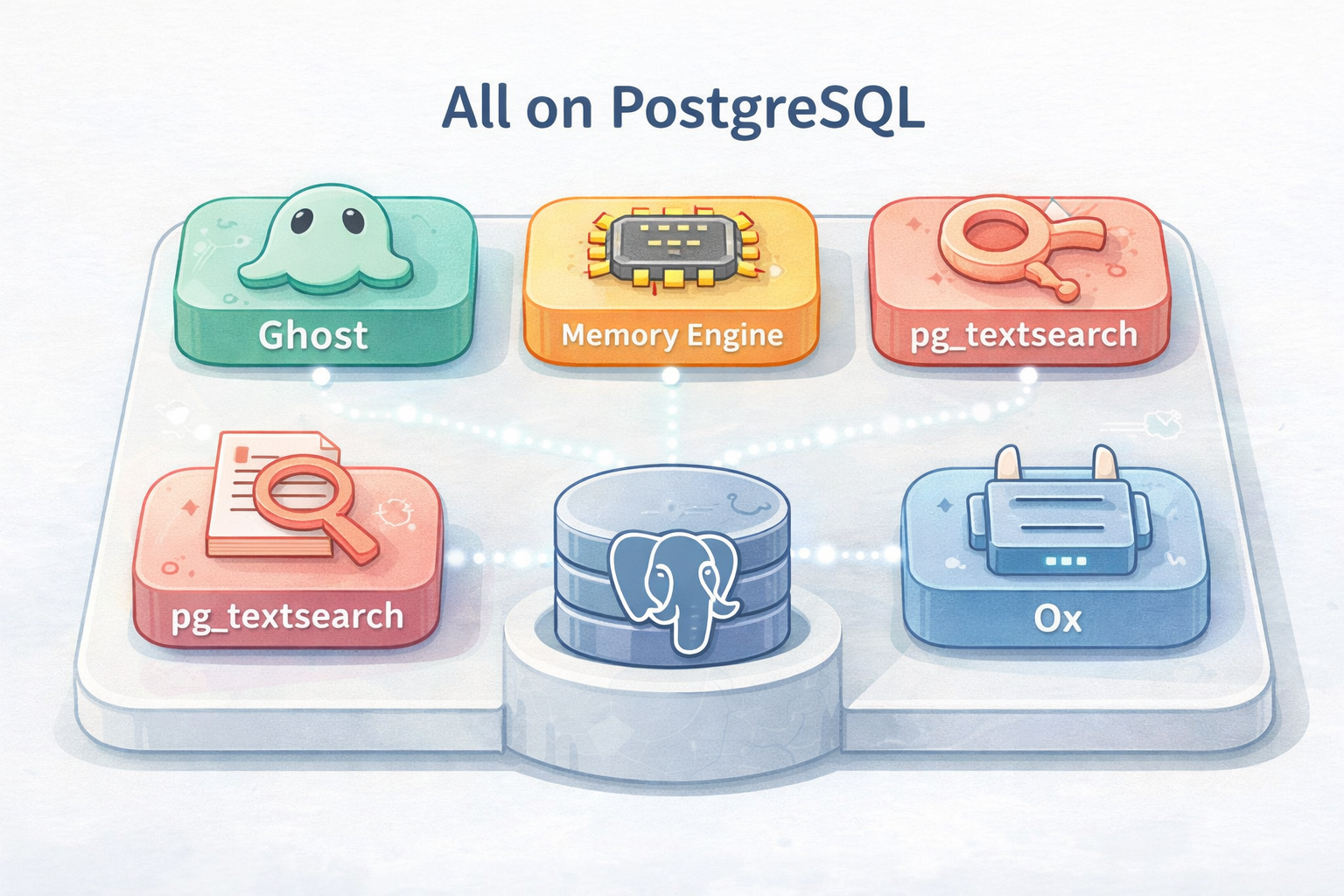

The Toolchain, All Running on PostgreSQL

Ghost does one thing, but paired with a suite of tools, it forms a complete agent memory and execution layer. The key point: everything runs on PostgreSQL, no extra external systems needed.

Memory Engine: Tracks “What the Agent Knew When”

Agent memory resets after every session. You might have tried vector databases or standalone memory services to solve this.

Memory Engine solves this inside PostgreSQL. Paired with Ghost, agent memory and data live in the same place, so the agent can query its memory with ordinary SQL.

More importantly, it has native temporal support. Most memory systems treat memory as a flat store—things go in, things come out, no tracking of what happened in between. Memory Engine tracks when facts were true, when they changed, when old information was superseded.

This mirrors how human memory works—you remember “housing prices rose in 2024” and also remember “they fell back in 2025.” The two memories don’t overwrite each other.
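The article doesn’t publish Memory Engine’s schema. A common way to model “when a fact was true” is a validity interval per row. The minimal sketch below assumes a hypothetical `facts` table (SQLite standing in for PostgreSQL) where recording a new fact closes the old row’s interval instead of overwriting it.

```python
import sqlite3

db = sqlite3.connect(":memory:")
# HYPOTHETICAL schema: each row is a fact plus the interval during which it held.
db.execute("""
    CREATE TABLE facts (
        subject    TEXT NOT NULL,
        attribute  TEXT NOT NULL,
        value      TEXT NOT NULL,
        valid_from TEXT NOT NULL,  -- ISO date the fact became true
        valid_to   TEXT            -- NULL while the fact is still current
    )""")

def remember(subject: str, attribute: str, value: str, as_of: str) -> None:
    """Supersede the current fact (close its interval) and insert the new one."""
    with db:  # one transaction: closing the old row and adding the new one happen together
        db.execute(
            "UPDATE facts SET valid_to = ? "
            "WHERE subject = ? AND attribute = ? AND valid_to IS NULL",
            (as_of, subject, attribute))
        db.execute("INSERT INTO facts VALUES (?, ?, ?, ?, NULL)",
                   (subject, attribute, value, as_of))

def recall(subject: str, attribute: str, at: str):
    """What was true at time `at`? Intervals are half-open: [valid_from, valid_to)."""
    row = db.execute(
        "SELECT value FROM facts WHERE subject = ? AND attribute = ? "
        "AND valid_from <= ? AND (valid_to IS NULL OR valid_to > ?)",
        (subject, attribute, at, at)).fetchone()
    return row[0] if row else None

remember("housing prices", "trend", "rising", "2024-01-01")
remember("housing prices", "trend", "falling back", "2025-01-01")

print(recall("housing prices", "trend", "2024-06-30"))  # rising
print(recall("housing prices", "trend", "2025-06-30"))  # falling back
```

Both memories survive; the query picks whichever was valid at the requested time. This tracks *valid time* (when a fact held). Answering “what did the agent know at 3pm on Tuesday?” also needs *transaction time*, a second timestamp recording when the agent learned each fact; tables that keep both are called bitemporal.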

On this foundation, keyword search, semantic search, faceted search, and hierarchical search all run in a single SQL query.

The underlying technology is pg_textsearch, which combines BM25 keyword precision with pgvector semantic similarity. Every Ghost database ships with it, so there’s no Elasticsearch cluster to deploy and no separate vector store to keep in sync.
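The article doesn’t say how pg_textsearch weighs keyword scores against vector similarity. The sketch below computes both in plain Python on a toy corpus and merges the two rankings with reciprocal rank fusion (RRF), one common fusion technique chosen here for illustration. The 3-dimensional vectors are made up; a real system would get them from an embedding model.

```python
import math
from collections import Counter

# Toy corpus and made-up 3-d "embeddings".
docs = {
    "d1": "postgres index tuning for slow queries",
    "d2": "agent memory stored in postgres tables",
    "d3": "vector embeddings capture semantic similarity",
}
vectors = {"d1": [0.9, 0.1, 0.0], "d2": [0.2, 0.9, 0.1], "d3": [0.1, 0.3, 0.9]}

def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Classic BM25: term frequency saturates via k1, long documents are penalized via b."""
    tokens = {d: text.split() for d, text in docs.items()}
    n = len(tokens)
    avgdl = sum(len(t) for t in tokens.values()) / n
    df = Counter(term for toks in tokens.values() for term in set(toks))
    scores = {}
    for d, toks in tokens.items():
        tf = Counter(toks)
        score = 0.0
        for term in query.split():
            if tf[term] == 0:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            norm = k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + norm)
        scores[d] = score
    return scores

def cosine(u, v):
    dot = sum(x * y for x, y in zip(u, v))
    return dot / (math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v)))

def rrf(*rankings, k=60):
    """Reciprocal rank fusion: each ranked list contributes 1 / (k + rank) per document."""
    scores = Counter()
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] += 1 / (k + rank)
    return [doc for doc, _ in scores.most_common()]

query, query_vec = "postgres memory", [0.3, 0.9, 0.1]  # query_vec is made up too
kw = bm25_scores(query, docs)
sem = {d: cosine(query_vec, v) for d, v in vectors.items()}
keyword_rank = sorted(kw, key=kw.get, reverse=True)
semantic_rank = sorted(sem, key=sem.get, reverse=True)
fused = rrf(keyword_rank, semantic_rank)
print(fused)
```

Because RRF combines ranks rather than raw scores, it sidesteps the fact that BM25 scores and cosine similarities live on different scales; `k=60` is the constant commonly used with it.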

TigerFS: Use PostgreSQL as a Filesystem

Agents constantly generate files: reports, code, datasets, logs. The standard approach is dumping everything into S3, but S3 files are disconnected—they can’t participate in transactions, can’t be queried, can’t guarantee concurrent write consistency.

TigerFS stores files in PostgreSQL, making them first-class database citizens. Writes are transactional, concurrent access won’t corrupt data, and multiple agents can read and write the same project without stepping on each other.
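The article doesn’t describe TigerFS’s storage layout. As an illustration of why database-backed files resist lost updates, here is a compare-and-swap write over a hypothetical `files` table with a version column, SQLite standing in for PostgreSQL.

```python
import sqlite3

db = sqlite3.connect(":memory:")
# HYPOTHETICAL schema: one row per file, version bumped on every write.
db.execute("CREATE TABLE files (path TEXT PRIMARY KEY, content TEXT NOT NULL, version INTEGER NOT NULL)")

class WriteConflict(Exception):
    """Raised when another writer changed the file after we read it."""

def read(path):
    return db.execute("SELECT content, version FROM files WHERE path = ?", (path,)).fetchone()

def create(path, content):
    with db:
        db.execute("INSERT INTO files VALUES (?, ?, 1)", (path, content))

def write(path, content, expected_version):
    """Compare-and-swap: update only if the version is still the one we read."""
    with db:
        cur = db.execute(
            "UPDATE files SET content = ?, version = version + 1 WHERE path = ? AND version = ?",
            (content, path, expected_version))
    if cur.rowcount == 0:
        raise WriteConflict(f"{path} changed after version {expected_version}")
    return expected_version + 1

create("reports/draft.md", "# Draft")
_, seen_by_a = read("reports/draft.md")  # both agents read version 1
_, seen_by_b = read("reports/draft.md")

write("reports/draft.md", "# Draft\n\nSection from agent A", seen_by_a)
conflict = False
try:
    write("reports/draft.md", "# Draft\n\nSection from agent B", seen_by_b)
except WriteConflict:
    conflict = True  # B must re-read and merge instead of silently overwriting A
```

The same pattern works unchanged in PostgreSQL; `SELECT ... FOR UPDATE` row locking is the pessimistic alternative when conflicts are frequent.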

Ox: Sandboxed Execution with Full Context

Agents need to run code. Ox provides a sandboxed execution environment that is isolated from the main branch yet connects directly to the agent’s database and TigerFS filesystem.

Code doesn’t run in a vacuum—it sees everything the agent knows: historical memory, relevant data, file context.
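The article doesn’t explain how Ox isolates code. As a rough sketch of the idea “isolated, but handed the agent’s context”, the function below runs a snippet in a separate Python process with a timeout, a throwaway working directory, and only the environment variables it is given. This is process separation, not a security boundary: real sandboxes rely on OS-level isolation such as containers or microVMs. The `DATABASE_URL` value is hypothetical.

```python
import os
import subprocess
import sys
import tempfile

def run_sandboxed(code: str, context: dict, timeout: float = 5.0):
    """Run a Python snippet in a child process with a timeout, a scratch cwd,
    and a minimal environment. NOTE: process separation only, not real isolation."""
    # Keep only what the interpreter needs to start, then add the agent's context.
    env = {k: os.environ[k] for k in ("PATH", "SYSTEMROOT") if k in os.environ}
    env.update(context)
    with tempfile.TemporaryDirectory() as scratch:
        result = subprocess.run(
            # -I: isolated mode, ignores PYTHON* variables and user site-packages
            [sys.executable, "-I", "-c", code],
            cwd=scratch, env=env, capture_output=True, text=True, timeout=timeout,
        )
    return result.returncode, result.stdout, result.stderr

# HYPOTHETICAL connection string; a real setup would inject the agent's actual credentials.
context = {"DATABASE_URL": "postgresql://agent@localhost/scratch"}
rc, out, err = run_sandboxed("import os; print(os.environ['DATABASE_URL'])", context)
print(rc, out.strip())
```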

Real-World Usage Examples

Ghost’s team shared what beta testers built:

The code review agent: picks up a PR, forks the database for a clean snapshot, runs the test suite in the Ox sandbox to check for regressions. Queries Memory Engine to see past reviews on the same files—what broke last time, what patterns the team prefers. Stores results in TigerFS, updates its own memory.

The research agent: spins up a Ghost database for each research project to store findings. Writes report drafts as Markdown files into TigerFS. Checks Memory Engine to see whether a company has been researched before and what was found last time. Archives findings with full temporal context, so next quarter the agent knows what the landscape looked like.

Multi-agent collaboration: three agents working together, one writes code, one writes docs, one runs tests. All three read and write files through TigerFS without interference. All three check Memory Engine to avoid duplicating work. All three have their own Ox sandboxes that can still access the shared Ghost database. Shared substrate, but no shared failure modes.
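The article doesn’t say how agents “check Memory Engine to avoid duplicating work.” One database-level way to get that guarantee is an atomic claim: a uniqueness constraint lets exactly one agent own a task, with no check-then-act race. A sketch with a hypothetical `claims` table, SQLite standing in for PostgreSQL (where `INSERT ... ON CONFLICT DO NOTHING` plays the same role):

```python
import sqlite3

db = sqlite3.connect(":memory:")
# The PRIMARY KEY makes claiming atomic: at most one row per task can exist.
db.execute("CREATE TABLE claims (task TEXT PRIMARY KEY, agent TEXT NOT NULL)")

def claim(task: str, agent: str) -> bool:
    """Try to claim a task; returns True only for the first agent to ask."""
    with db:
        cur = db.execute(
            "INSERT OR IGNORE INTO claims (task, agent) VALUES (?, ?)", (task, agent))
    return cur.rowcount == 1  # 0 rows inserted means another agent already owns it

print(claim("implement parser", "coder"))   # True
print(claim("implement parser", "tester"))  # False: already claimed
print(claim("document parser", "writer"))   # True
```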

Why PostgreSQL

The Ghost team spent years working on TimescaleDB, one of the most widely used time-series extensions for PostgreSQL. Memory Engine’s temporal capabilities come directly from a decade of that engineering work.

The reason to choose PostgreSQL is brutally practical: it’s been proven in production for over 30 years, handling transactions, concurrency, replication, and failure recovery with ease. Agent infrastructure needs a stable foundation, and stable means boring—boring things are reliable.

When database, memory, search, and files all run on the same PostgreSQL, they naturally share the same transaction model, the same authentication, the same query language. That “glue layer” was never needed in the first place.
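To make the shared-transaction point concrete: in this sketch (SQLite standing in for PostgreSQL, table names hypothetical), a file write and a memory note commit or roll back together. With files in S3 and memory in a separate service, no single transaction could span both.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE files    (path TEXT PRIMARY KEY, content TEXT NOT NULL);
    CREATE TABLE memories (note TEXT NOT NULL);
""")

def save_report(path, content, note, crash=False):
    """Write a file and record a memory of it in ONE transaction."""
    try:
        with db:  # commits on success, rolls back both inserts on any exception
            db.execute("INSERT INTO files VALUES (?, ?)", (path, content))
            db.execute("INSERT INTO memories VALUES (?)", (note,))
            if crash:
                raise RuntimeError("simulated failure before commit")
    except RuntimeError:
        pass  # nothing was saved, in either table

save_report("reports/r1.md", "first report", "wrote r1")
save_report("reports/r2.md", "second report", "wrote r2", crash=True)

print(db.execute("SELECT path FROM files").fetchall())     # [('reports/r1.md',)]
print(db.execute("SELECT note FROM memories").fetchall())  # [('wrote r1',)]
```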

Getting Started

Ghost is in early access. Install with:

```shell
curl -fsSL https://install.ghost.build | sh
```

Run `ghost mcp install` directly in Claude Code, and agents can start using this toolchain immediately.

The full toolkit:

- Ghost → Instant, ephemeral databases for agents

- Memory Engine → Persistent, temporal agent memory

- pg_textsearch → BM25 + vector hybrid search

- TigerFS → PostgreSQL-backed filesystem

- Ox → Sandboxed execution connected to your data

All running on PostgreSQL, all MCP-native. Each works standalone; together they work better.

FAQ

What’s the difference between Ghost and traditional database services?

Traditional databases require careful sizing, cloud provider selection, storage planning, and ongoing maintenance. Ghost databases are created on demand and can be discarded after use—they don’t occupy your ops bandwidth. Every Ghost database is native PostgreSQL, so you don’t need to learn a new query language.

Why build everything on PostgreSQL?

PostgreSQL has been proven in production for thirty years with mature transaction processing, concurrency control, and failure recovery. When database, memory, search, and files all run on the same system, they naturally share the same transaction model and authentication—no extra glue code needed.

How does Memory Engine’s temporal memory actually work?

Most memory systems store and retrieve information without tracking what happened in between. Memory Engine tracks when each piece of information was true, when it changed, and when it was superseded. You can ask “what did the agent know about this user at 3pm on Tuesday?” and get an answer, because time is part of memory itself.