Did Claude Opus 4.7 Secretly Raise Prices? 497 Developers Reveal the Truth

Have you ever opened your utility bill at the end of the month, only to find it had jumped by nearly 40%? You dig through every notice and realize the rate changed weeks ago. You just didn’t see it.

That’s exactly how a lot of engineers using Claude Opus felt this week.

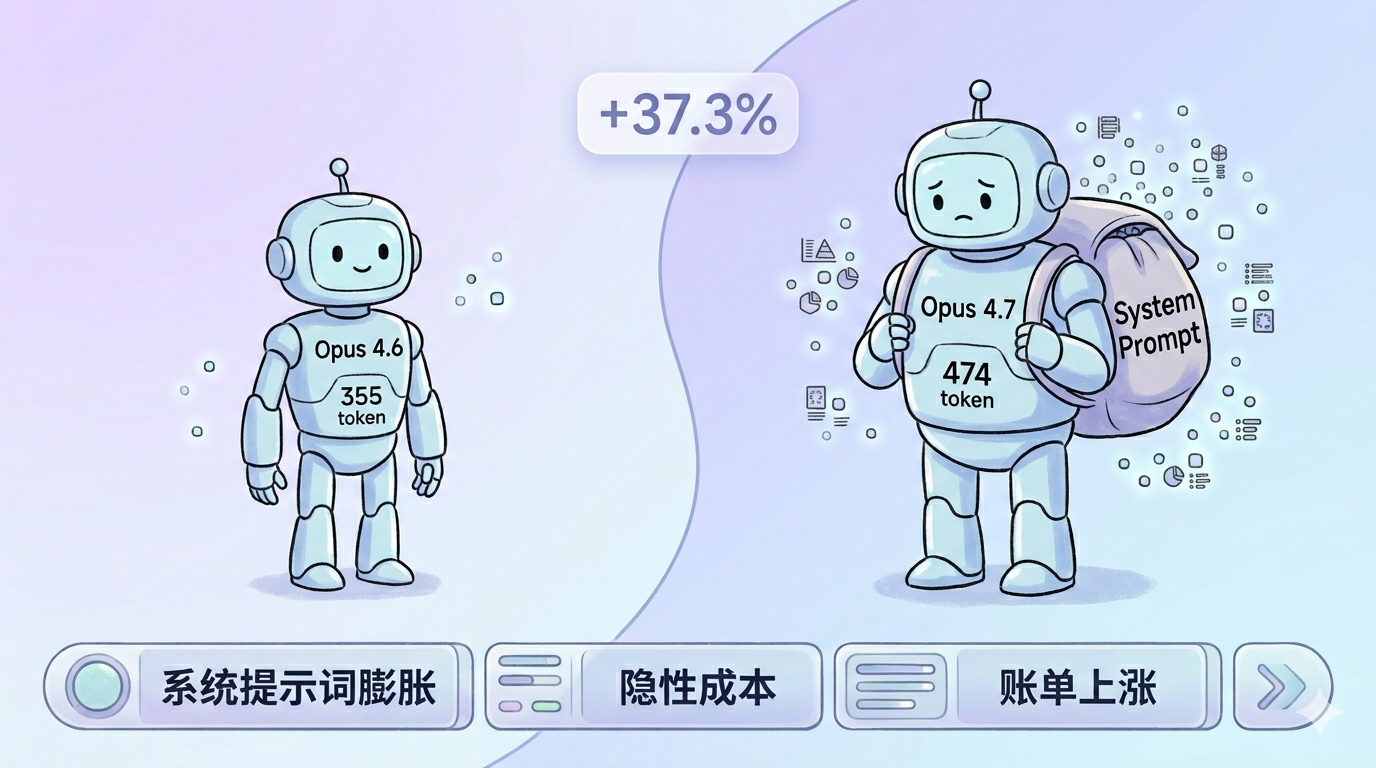

It started with an unassuming link on Hacker News. Someone built a site called Tokenomics that anonymously collects token usage data from developers. I clicked through and skimmed the numbers. After aggregating 497 submissions, one figure stood out: Opus 4.7 uses 37.3% more request tokens on average than 4.6.

Not 37% better performance. 37% more usage. So if your monthly bill was a thousand dollars, it’s now pushing fourteen hundred, and the Anthropic pricing page doesn’t mention this anywhere.

The Numbers Don’t Lie: 497 Samples Testify

The design of this comparison site is clever. It has developers send the exact same request to Opus 4.6 and 4.7, then anonymously report the token count for both. No lab environment. No cherry-picked benchmarks. Just real inputs from real workflows.

Those 497 samples cover pretty much every use case you can think of: hundred-line code reviews, natural language requirement descriptions, complex system architecture prompts.

The aggregated results are remarkably consistent:

- Average request token increase: +37.3%

- Average cost increase: +37.3%

- Average request size: from 355 tokens to 474 tokens

Some individual cases are even more extreme. One comparison showed the same request costing 8 tokens on 4.6 and 12 on 4.7, a 50% jump. Another went from 28 to 54 tokens, essentially doubling. There were a few lucky ones with almost no change, but they’re the minority.
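One detail in these figures is worth a second look: going from 355 to 474 tokens is an increase of about 33.5%, not 37.3%. A plausible explanation (an assumption, since the site's exact methodology isn't described here) is that the headline number averages per-request percentage changes, so a short request like the 8-to-12 case counts as much as a long one. The sketch below compares the two ways of aggregating; the 1,000-to-1,050 request is made up purely for illustration.

```python
def pct_increase(before: float, after: float) -> float:
    """Percentage increase from `before` to `after`."""
    return (after - before) * 100 / before

def mean_of_ratios(pairs: list[tuple[int, int]]) -> float:
    """Average of per-request increases: every request counts equally."""
    return sum(pct_increase(b, a) for b, a in pairs) / len(pairs)

def ratio_of_totals(pairs: list[tuple[int, int]]) -> float:
    """Increase in total tokens: long requests dominate, as they do on a bill."""
    before = sum(b for b, _ in pairs)
    after = sum(a for _, a in pairs)
    return pct_increase(before, after)

# Average request sizes quoted in the list above.
print(f"{pct_increase(355, 474):.1f}%")  # 33.5%

# One short request from the article (8 -> 12) plus one hypothetical long request.
pairs = [(8, 12), (1000, 1050)]
print(f"mean of per-request increases: {mean_of_ratios(pairs):.1f}%")  # 27.5%
print(f"increase in total tokens:      {ratio_of_totals(pairs):.1f}%")  # 5.4%
```

When short requests see the biggest percentage jumps, the per-request average runs ahead of the change in total tokens, and total tokens are what the bill actually tracks.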

Why Did Tokens Increase? Blame the System Prompt

If you only looked at the numbers, you might think Anthropic quietly changed the pricing. But it’s not the unit price that went up; it’s the consumption.

It’s like going to a gas station where fuel prices haven’t changed, but your new car suddenly gets worse mileage. The problem isn’t the pump; it’s the engine tuning.

Later that same day, independent developer Simon Willison published a post that identified the culprit: Opus 4.7’s system prompt is nearly twice as long as 4.6’s.

The system prompt is essentially the model’s factory settings. It tells the model how to talk, how to think, and what rules to follow. The 4.7 instruction manual is noticeably thicker, packed with detailed constraints about tool usage, code execution, and safety boundaries.

These constraints aren’t bad; they actually make the model more reliable. But the trade-off is that before every conversation, the model has to read through this longer manual first. And those manual tokens? They all count as input tokens on your bill.

The Hidden Cost of Opus 4.7: What It Means for Your Bill

Let’s run some real numbers.

Say your project sends a thousand requests to Opus daily, with an average input plus output of 4,000 tokens per request. At Anthropic’s current Opus pricing, that’s $15 per million input tokens and $75 per million output tokens.

In the 4.6 era, if input is one-third and output is two-thirds, your daily cost looks like:

- Input: 1.33M x $15 = $20

- Output: 2.67M x $75 = $200

- Total: $220/day

Switch to 4.7. If input tokens bloat by 37% due to the system prompt while output stays flat, your cost becomes:

- Input: 1.83M x $15 = $27

- Output: 2.67M x $75 = $200

- Total: $227/day

Looks like only $7 more? Hold on. The system prompt bloat doesn’t just affect your input; the model’s reasoning process might get longer too. If output also increases, even by just 10%, that’s an extra $20 per day, or about $7,300 per year, enough to hire an intern for two months.
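To rerun this arithmetic with your own traffic, a small calculator is enough. This is a sketch: the per-million prices are the ones quoted above (check Anthropic's pricing page for current numbers), and `daily_cost` and its growth parameters are illustrative names, not part of any SDK.

```python
# Prices quoted in this article, in USD per million tokens.
INPUT_PRICE_PER_M = 15.0
OUTPUT_PRICE_PER_M = 75.0

def daily_cost(requests: int, tokens_per_request: int, input_share: float,
               input_growth: float = 0.0, output_growth: float = 0.0) -> float:
    """Daily cost in USD for a traffic mix, with optional growth in input/output tokens."""
    total = requests * tokens_per_request
    input_tokens = total * input_share * (1 + input_growth)
    output_tokens = total * (1 - input_share) * (1 + output_growth)
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000

baseline = daily_cost(1000, 4000, 1 / 3)                       # 4.6 scenario
input_up = daily_cost(1000, 4000, 1 / 3, input_growth=0.373)   # input +37.3%
both_up = daily_cost(1000, 4000, 1 / 3, input_growth=0.373, output_growth=0.10)

print(f"4.6 baseline:         ${baseline:.2f}/day")  # $220.00/day
print(f"4.7, input only:      ${input_up:.2f}/day")  # $227.46/day
print(f"4.7, output +10% too: ${both_up:.2f}/day")   # $247.46/day
print(f"output growth alone:  ${(both_up - input_up) * 365:,.0f}/year")  # $7,300/year
```

Plugging in your own request volume, token mix, and observed growth rates shows quickly whether input or output is driving the difference.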

Your Claude Bill Went Up? Three Immediate Fixes

First, break down your bill.

Don’t just stare at the monthly total. Use the Anthropic console or your own logs to separate input and output tokens per request. That way you’ll know whether it’s “input inflation” or “runaway output” before deciding what to fix.
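If you already log API responses, this breakdown can be scripted. The sketch assumes each log record stores the response's `model` and its `usage` object as a dict with `input_tokens` and `output_tokens` (the field names the Messages API reports); the model labels, token counts, and 10% threshold are placeholders, and prompt-caching token fields are ignored.

```python
from collections import defaultdict

def usage_by_model(records: list[dict]) -> dict[str, dict[str, float]]:
    """Average input and output tokens per request, grouped by model label."""
    sums = defaultdict(lambda: {"input": 0, "output": 0, "n": 0})
    for rec in records:
        s = sums[rec["model"]]
        s["input"] += rec["usage"]["input_tokens"]
        s["output"] += rec["usage"]["output_tokens"]
        s["n"] += 1
    return {
        model: {"avg_input": s["input"] / s["n"], "avg_output": s["output"] / s["n"]}
        for model, s in sums.items()
    }

def diagnose(old: dict[str, float], new: dict[str, float], threshold: float = 0.10) -> list[str]:
    """Name which side grew by more than `threshold` (10% by default)."""
    findings = []
    if new["avg_input"] > old["avg_input"] * (1 + threshold):
        findings.append("input inflation")
    if new["avg_output"] > old["avg_output"] * (1 + threshold):
        findings.append("runaway output")
    return findings

# Hypothetical log records; "opus-4.6" / "opus-4.7" are placeholder labels.
logs = [
    {"model": "opus-4.6", "usage": {"input_tokens": 355, "output_tokens": 800}},
    {"model": "opus-4.7", "usage": {"input_tokens": 474, "output_tokens": 820}},
]
stats = usage_by_model(logs)
print(diagnose(stats["opus-4.6"], stats["opus-4.7"]))  # ['input inflation']
```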

Second, put your requests on a diet.

If you’ve confirmed the system prompt is bloating your input, there’s one thing you can do at the application layer: trim your context before sending. Don’t make the model carry thirty rounds of conversation history. Summarize what needs summarizing, discard what needs discarding.
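A crude version of this diet is to send only the most recent turns. The `trim_history` helper below is hypothetical; it assumes messages in the `{"role": ..., "content": ...}` shape and that the history should open with a user turn. Summarizing the dropped turns would need an extra model call and is left out.

```python
def trim_history(messages: list[dict], keep_last: int = 6) -> list[dict]:
    """Return only the most recent `keep_last` messages of a chat history.

    Leading assistant messages left over after the cut are dropped too,
    so the trimmed history still starts with a user turn.
    """
    if keep_last <= 0:
        return []  # guard: messages[-0:] would return the whole list
    trimmed = messages[-keep_last:]
    while trimmed and trimmed[0]["role"] != "user":
        trimmed = trimmed[1:]
    return trimmed

# Usage: thirty rounds of hypothetical history (60 alternating messages).
history = [
    {"role": "user" if i % 2 == 0 else "assistant", "content": f"message {i}"}
    for i in range(60)
]
print(len(trim_history(history, keep_last=6)))  # 6
print(len(trim_history(history, keep_last=5)))  # 4: the leading assistant reply is dropped
```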

Third, run an A/B test.

If your business isn’t picky about model versions, route some traffic back to 4.6 for a week and compare cost versus performance. 4.7 does show improvement in coding ability and reasoning depth, but if your use case is just simple classification or formatting, that premium might not be worth paying.
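For the traffic split itself, hashing a stable key keeps each user on the same model for the whole week, which makes the cost and quality comparison cleaner. A minimal sketch; `MODEL_OLD` and `MODEL_NEW` are placeholder labels, so substitute the exact model IDs from Anthropic's documentation.

```python
import hashlib
from collections import Counter

# Placeholder labels; use the exact model IDs listed in Anthropic's docs.
MODEL_OLD = "opus-4.6"
MODEL_NEW = "opus-4.7"

def pick_model(stable_key: str, old_share: float = 0.2) -> str:
    """Deterministically route roughly `old_share` of keys to the older model.

    The same key (e.g. a user or session ID) always lands in the same bucket,
    so each user sees one variant for the whole test.
    """
    digest = hashlib.sha256(stable_key.encode("utf-8")).digest()
    # Keep 53 bits so the division is exact and the bucket stays in [0, 1).
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return MODEL_OLD if bucket < old_share else MODEL_NEW

# Usage: split 10,000 hypothetical users; roughly 20% should get the older model.
counts = Counter(pick_model(f"user-{i}") for i in range(10_000))
print(counts)
```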

Claude Opus 4.7 Upgrade FAQ

Q: Has Anthropic officially acknowledged this?

Not specifically about token increases. But from the publicly available system prompt comparisons, this looks more like a side effect of adding features, not a pricing strategy change.

Q: Will every user feel the 37% increase?

Not necessarily. Thirty-seven percent is just the community average. If you’re throwing ten-thousand-token codebases at it every time, a few hundred extra system prompt tokens won’t even register. But if you’re sending short messages, the spike is glaring.
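A quick calculation makes the effect of a fixed overhead concrete. The 300-token figure below is a hypothetical stand-in for "a few hundred extra system prompt tokens", not a measured value.

```python
def relative_increase(request_tokens: int, overhead_tokens: int) -> float:
    """Percent increase in input tokens when a fixed overhead is added to a request."""
    return overhead_tokens * 100 / request_tokens

OVERHEAD = 300  # hypothetical extra tokens per request, not a measured figure

for size in (50, 500, 10_000):
    print(f"{size:>6}-token request: +{relative_increase(size, OVERHEAD):.0f}%")
# prints +600%, +60%, and +3% respectively
```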

Q: Do Sonnet and Haiku have the same problem?

Most community testing has focused on Opus. Sonnet 4.7’s system prompt also got longer, though not as dramatically. Haiku is designed to be lightweight with a short prompt to begin with, so the impact should be minimal.

Final Thoughts

Here’s the thing about model upgrades. We habitually look at benchmark accuracy scores first, rarely noticing that token consumption has crept up as well. Four hundred ninety-seven anonymous data points are telling us something simple: choosing a model isn’t just about capability; you have to do the math on cost too. And some costs don’t show up directly on the bill.

Have you checked your Claude bill lately? Notice anything unusual? Let’s talk in the comments.

If you want more tool reviews and AI programming hands-on content, follow my blog “Mengshou Programming” for weekly updates.