LLM

5 posts

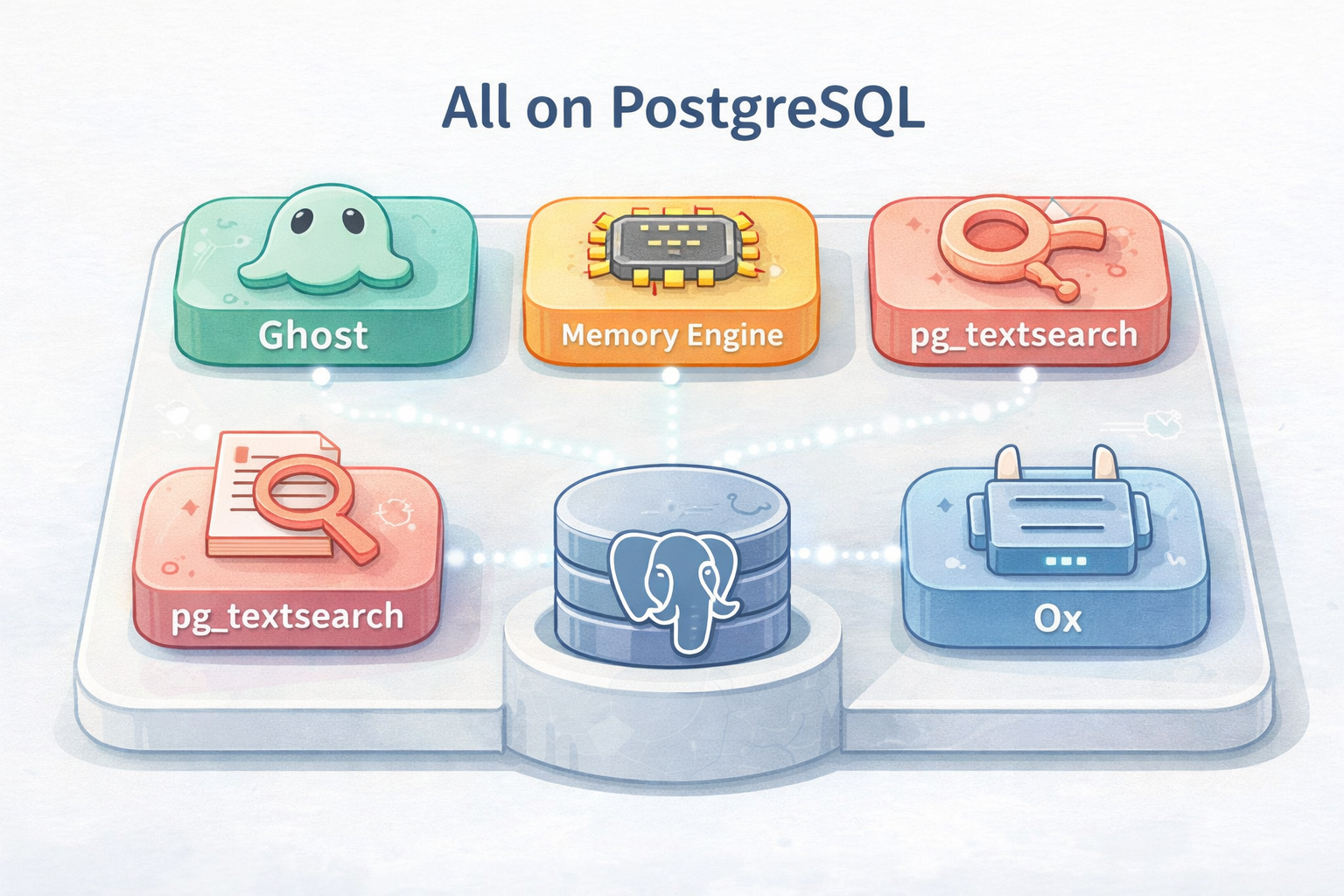

Your AI Agent Can Think, But It Can't Remember

AI agents can reason, plan, and converse, but they forget everything once the session ends. The Ghost project solves this with infrastructure built entirely on PostgreSQL, turning the database into the agent's memory palace.

Cramming a 400B Model into 48GB: The Magic Behind LLM in a Flash

An Apple paper from 2023 made it possible to run a 400-billion-parameter model on an ordinary MacBook. Behind its core techniques, MoE and quantization, lies an engineering philosophy built around loading weights on demand.

90 Seconds of Waiting, Gone: How oMLX Buries Ollama on Mac

oMLX is built for Apple Silicon. It combines the MLX framework, an SSD-backed KV cache, and continuous batching to cut time to first token (TTFT) from 90 seconds to 1–3 seconds in long-context scenarios, outperforming Ollama across the board.

Don't Build a Thousand Agents: How Ramp Automates Finance with One Agent

Ramp, America's fastest-growing enterprise finance platform, is valued at $32B, serves 50,000+ customers, and processes $100B+ in annual transaction volume. Instead of building many agents, it chose a "one Agent + a thousand skills" architecture. This is a deep dive into Ramp's hands-on lessons from putting AI into production.

Karpathy's Latest Work: A Complete GPT in 200 Lines of Code - The Most Adorable AI Tutorial

Andrej Karpathy has done it again! This time he implemented a GPT model that supports both training and inference in just 200 lines of pure Python, with no dependencies. It may be the most concise large language model implementation ever written.