Mistral Forge Deep Dive: The Nuclear Weapon for Enterprise Fine-tuning

I spent 3 hours reading the official documentation. Here’s my take.

The Problem Enterprises Face with AI

Imagine you’re a tech lead at a mid-sized company. You want AI to help with things like:

- Writing code that understands your company’s internal APIs

- Customer service that answers questions about your products

- Document Q&A that grasps internal terminology

Here’s the thing: the AI models on the market are trained mostly on public data. They’re great at general tasks, but they know nothing about your company’s internal systems. They don’t know your code standards, your business processes, or the “unwritten rules” that only veteran employees understand.

So what do you do? Fine-tuning.

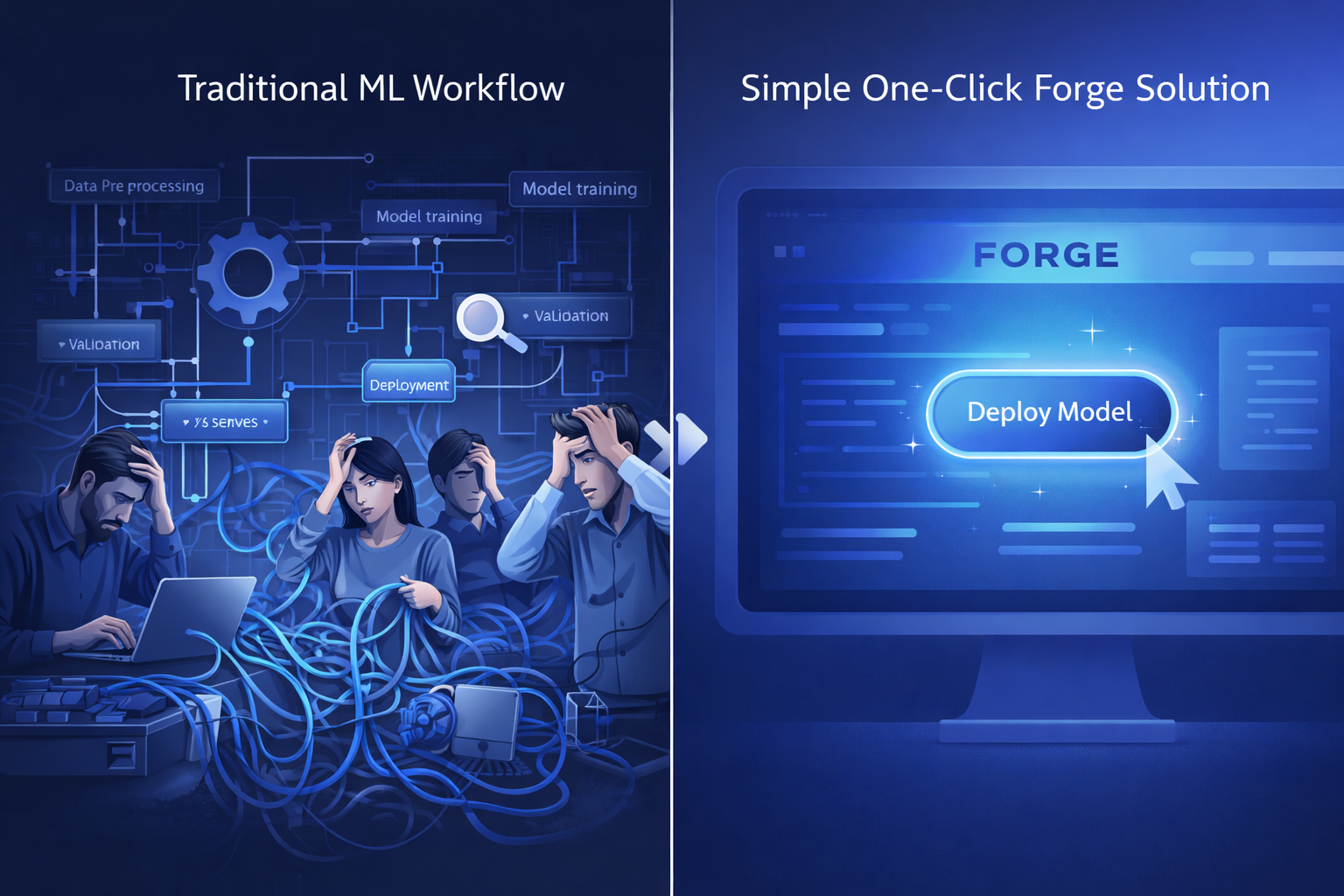

Then you realize fine-tuning is a nightmare:

- Clean your internal data first

- Choose a base model

- Get some GPUs

- Tune hyperparameters (learning rate, batch size, etc.)

- Run training - hours to days

- Evaluate - if it fails, start over

- Deploy to production
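The tune, train, evaluate, start-over part of that list is where most of the cost goes. Below is a minimal Python sketch of that loop as a random hyperparameter search. `train_and_evaluate` is a hypothetical stand-in for a real training run (here just a toy formula that peaks at an arbitrary "best" setting), and the search ranges and target score are assumptions for illustration. In real life, every iteration of this loop is hours to days of GPU time.

```python
import math
import random

def train_and_evaluate(learning_rate: float, batch_size: int) -> float:
    """Hypothetical stand-in for a full training run plus evaluation.

    Returns a toy score in (0, 1] that peaks at learning_rate=1e-4, batch_size=32.
    """
    lr_penalty = (math.log10(learning_rate) + 4) ** 2
    bs_penalty = (math.log2(batch_size) - 5) ** 2 / 10
    return 1.0 / (1.0 + lr_penalty + bs_penalty)

def random_search(max_runs: int, target_score: float, seed: int = 0):
    """Sample hyperparameters, train, evaluate; if the score is too low, start over."""
    rng = random.Random(seed)
    best = None  # (score, learning_rate, batch_size)
    for run in range(1, max_runs + 1):
        learning_rate = 10 ** rng.uniform(-6, -2)  # log-uniform between 1e-6 and 1e-2
        batch_size = rng.choice([8, 16, 32, 64, 128])
        score = train_and_evaluate(learning_rate, batch_size)
        if best is None or score > best[0]:
            best = (score, learning_rate, batch_size)
        if score >= target_score:
            return run, best  # good enough: move on to deployment
    return max_runs, best  # budget exhausted: settle for the best run so far

runs_used, (score, lr, bs) = random_search(max_runs=50, target_score=0.8)
print(f"runs used: {runs_used}, best score: {score:.3f}, lr={lr:.1e}, batch={bs}")
```

Swap the toy formula for a real training job and the loop structure stays the same; only the cost per iteration explodes.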

Every step is a trap. Small and medium companies simply can’t afford it.

What Forge Wants to Do

In short, Forge wants to turn the entire pipeline above into a “one-click service.”

Enterprises only need to do two things:

- Feed data (documents, code, chat logs)

- State requirements (I need a customer service AI)

Forge handles everything else: data cleaning, model training, hyperparameter tuning, and deployment.

And it’s not a one-time thing. The emphasis is on “continuous improvement”: regulations change, systems get updated, and new data becomes available. Rather than a single training run, Forge supports continuously adjusting the model through reinforcement learning based on feedback.

Interesting Technical Points

MoE Architecture

Forge supports Mixture of Experts (MoE). What does that mean?

Think about your customer service team:

- One person handles technical issues

- Another handles after-sales

- Someone else handles complaints

When a customer asks a technical question, the technical specialist handles it. For complaints, the complaint specialist comes in.

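That’s MoE. A model contains multiple “experts” (sub-networks), and a router sends each token to only the few most relevant ones. Experts that aren’t selected are skipped for that token, so compute per token stays low, although all experts still have to be loaded in memory.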
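The “who handles this?” decision is made by a small router (gating network). Here is a minimal Python sketch of top-k routing for a single token: score every expert, keep the k best, softmax their scores into weights, and call only those experts. The toy expert functions and router scores are made-up placeholders. Real MoE layers do this with learned weight matrices on every token; Mistral’s Mixtral, for example, routes each token to 2 of its 8 experts.

```python
import math
from typing import Callable, List, Tuple

Expert = Callable[[float], float]

def top_k_route(router_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax their logits into weights."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    max_logit = max(router_logits[i] for i in top)  # subtract max for numerical stability
    exps = [math.exp(router_logits[i] - max_logit) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x: float, experts: List[Expert], router_logits: List[float], k: int = 2):
    """Weighted sum of the selected experts' outputs; unselected experts are never called."""
    routes = top_k_route(router_logits, k)
    output = sum(weight * experts[i](x) for i, weight in routes)
    return output, [i for i, _ in routes]

# Hypothetical toy experts standing in for feed-forward sub-networks.
experts: List[Expert] = [
    lambda x: x + 1.0,   # expert 0
    lambda x: 2.0 * x,   # expert 1
    lambda x: x * x,     # expert 2
    lambda x: -x,        # expert 3
]
# In a real model these logits come from a learned router applied to the token.
router_logits = [0.1, 2.0, 1.0, -1.0]

output, used = moe_forward(3.0, experts, router_logits, k=2)
print(f"experts used: {used}, output: {output:.3f}")
```

Only experts 1 and 2 run here; experts 0 and 3 cost nothing for this token, which is where the savings come from.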

Could save money, might work better. We’ll see.

Three Training Methods

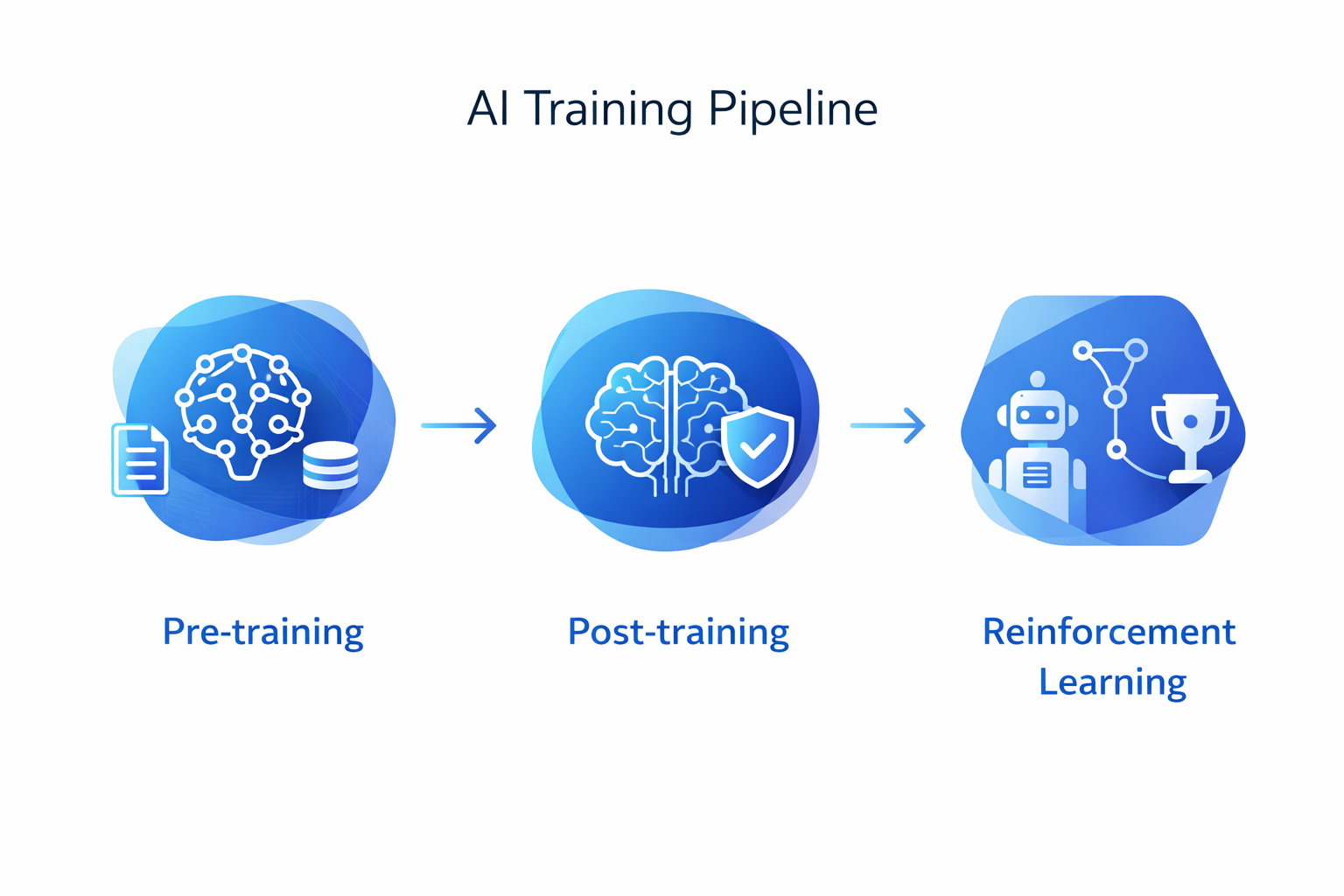

The original document spends a lot of space on the training process. Here it is in plain terms:

Pre-training: Throw a bunch of internal data at it to establish basic understanding.

Post-training: Fine-tune for specific tasks. For example, take the same model and specialize it for code review, or for customer service.
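Post-training for a specific task starts with task-specific examples. Here is a minimal Python sketch that turns Q&A pairs into training records, assuming the chat-style JSONL layout (one `{"messages": [...]}` object per line, with `system`/`user`/`assistant` roles) that many hosted fine-tuning APIs use. The article doesn’t say what format Forge expects, and the company name and sample pairs are invented.

```python
import json
from typing import Dict, List, Tuple

def to_chat_record(question: str, answer: str, system_prompt: str = "") -> Dict:
    """Turn one Q&A pair into a chat-format training record (assumed layout)."""
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": question})
    messages.append({"role": "assistant", "content": answer})
    return {"messages": messages}

def write_jsonl(path: str, pairs: List[Tuple[str, str]], system_prompt: str = "") -> int:
    """Write one JSON object per line; returns the number of records written."""
    with open(path, "w", encoding="utf-8") as f:
        for question, answer in pairs:
            record = to_chat_record(question, answer, system_prompt)
            f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return len(pairs)

# Invented customer-service examples; real ones would come from support logs.
support_pairs = [
    ("How do I reset my device?", "Hold the power button for 10 seconds, then release."),
    ("Is the warranty transferable?", "Yes, within the first 12 months of purchase."),
]

count = write_jsonl("customer_service.jsonl", support_pairs,
                    system_prompt="You are Acme's support assistant.")
print(f"wrote {count} records")
```

The same base model fed a file of code-review pairs instead would be specialized for code review; only the data changes.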

Reinforcement Learning: This is the part I find most interesting.

Traditional fine-tuning teaches models to “answer correctly.” Forge’s reinforcement learning can also score answers against company-internal standards, for example:

- Correct answer: +1.0

- Uses company terminology: +0.5

- Follows security guidelines: +0.3

- Response timeout: -0.5

- Hallucination: -1.0

In other words, it doesn’t just check if answers are right - it also checks if they follow company rules. Much more interesting than just tuning parameters.
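That rubric maps directly onto a reward function. Here is a minimal Python sketch, assuming each check has already been judged upstream and arrives as a boolean. In a real setup those verdicts would come from tests, rule checkers, or a grader model. The `Judgement` class and field names are hypothetical, nothing here is Forge’s actual API, and the weights are the ones listed above.

```python
from dataclasses import dataclass

# Weights from the example rubric: positive for desired behavior, negative for failures.
REWARD_WEIGHTS = {
    "correct": 1.0,
    "uses_company_terms": 0.5,
    "follows_security": 0.3,
    "timed_out": -0.5,
    "hallucinated": -1.0,
}

@dataclass
class Judgement:
    """Hypothetical per-response verdicts produced by upstream checkers."""
    correct: bool
    uses_company_terms: bool
    follows_security: bool
    timed_out: bool
    hallucinated: bool

def reward(j: Judgement) -> float:
    """Sum the weights of every check that fired for this response."""
    return sum(w for name, w in REWARD_WEIGHTS.items() if getattr(j, name))

good = Judgement(correct=True, uses_company_terms=True, follows_security=True,
                 timed_out=False, hallucinated=False)
bad = Judgement(correct=False, uses_company_terms=True, follows_security=True,
                timed_out=True, hallucinated=True)
print(reward(good), reward(bad))
```

Note how the penalties offset the rewards: a correct answer that also hallucinates nets 0 from those two checks, so being right isn’t enough on its own.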

Agent-first Design

The original document stresses that Forge is designed for agents first, not just for human users.

The exact quote: “Code agents are becoming the primary users of developer tools, so we built Forge for them first.”

What does this mean? In the future, AI might train AI. An agent called Mistral Vibe can operate Forge by itself:

- Auto-tune parameters

- Auto-generate training data

- Auto-run evaluations

It makes itself stronger.

When I read this, my thought was: Do we still need humans to train models?

Customize Models with Plain English

This is interesting. Forge claims “anyone, including Agents, can customize a model just by writing plain English.”

No code and no ML knowledge required: just describe what you want in words and train your own AI. If that holds up, the barrier really is lower.

Partners

The official list of partners:

- ASML (semiconductor equipment giant)

- DSO (Singapore defense lab)

- Ericsson

- European Space Agency

- HTX (Singapore’s Home Team Science and Technology Agency)

- Reply (Italian consulting firm)

All of them are large enterprises or government agencies. Can SMEs afford it? Not a word about pricing.

My Take

The Good:

- The lower barrier is real. Companies may no longer need a dedicated ML team

- Data doesn’t have to go to OpenAI, which is better for security

- The MoE direction is right: it could save money and potentially perform better

- “Continuous improvement” philosophy is correct - not a one-time training

The Concerns:

- All official cases are ASML, Ericsson level. Can SMEs afford it? No word on pricing

- Can its ecosystem compete with LangChain and LlamaIndex? Hard to say

- It claims a lower barrier, but how much lower? Nobody knows yet

- “Plain English customization” sounds idealistic - actual results questionable

Who Is This For?

| Role | Recommendation |
|---|---|
| Big enterprises | Highly recommended |
| AI startups | Worth a try |
| Individual developers | Wait and see |
| Regular users | Not for you |

Access currently requires an application: https://mistral.ai/enterprise

What do you think? Is enterprise fine-tuning a real need or just big tech hype?

Drop your thoughts in the comments.

Follow 「全栈之巅-梦兽编程」 for more

#AI #Mistral #Fine-tuning